E4: The Linguistics of Machines: LLM and NLP

Human and Machine Communication through Deep Learning Techniques

Introduction

As technology keeps evolving, you have probably noticed:

WE CAN NOW TALK WITH MACHINES!!!!

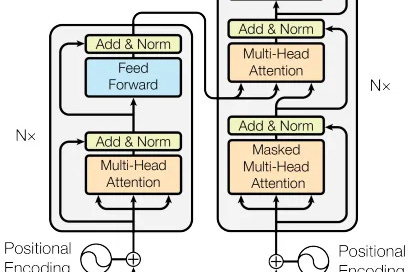

The journey so far, through Machine Learning and Deep Learning, has laid a good foundation for understanding how machines interpret and generate human language. In this episode, I will try to explain how Large Language Models (LLMs) and Natural Language Processing (NLP) work. Together, these techniques fuse linguistic and machine learning principles to foster a more nuanced interaction between humans and computers.

This new chapter of AI Odyssey is about to show how we can teach machines to understand and respond to textual data, mirroring human understanding to a significant extent. This exploration will unveil the architectural designs and underlying mechanisms that help machines process textual data effectively, thereby enhan…
